Everything you need to know about Fine-Tuning and RAG — how each works, when to use each, the key differences, and which strategy wins for your specific use case in 2026.

Fine-Tuning vs RAG by the Numbers 2026

| 96% Hybrid Model Accuracy | 87% RAG Factual Accuracy | 94% Fine-Tuning Domain Accuracy | 85% RAG Hallucination Reduction | 60% Cost Saving with Hybrid |

1. What Are Fine-Tuning and RAG?

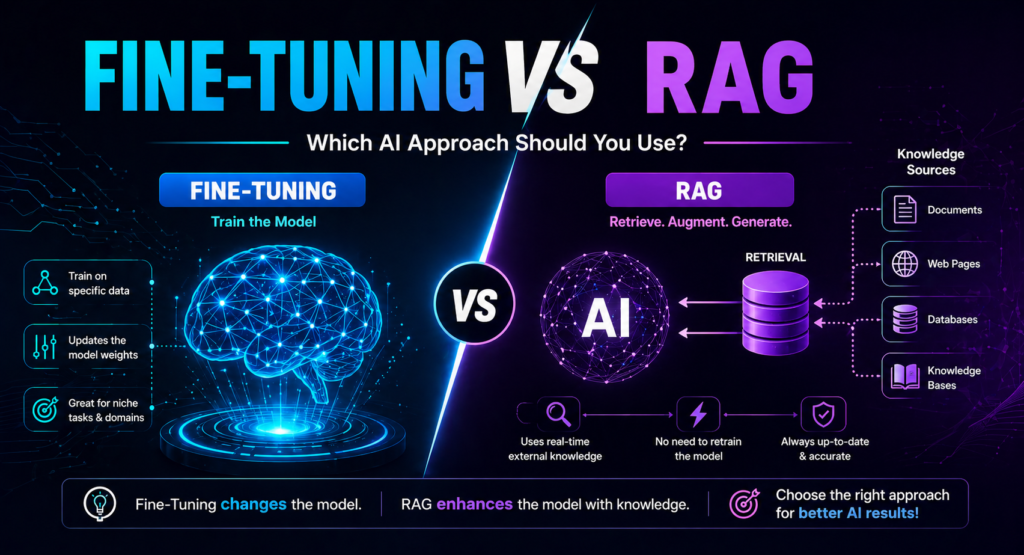

Fine-Tuning and Retrieval-Augmented Generation (RAG) are the two primary methods enterprises use to get more value out of large language models. Both work by tailoring an LLM to specific use cases, but the methodologies behind them differ fundamentally — and choosing the wrong approach can mean outdated knowledge, inaccurate responses, or a model that simply fails to capture your domain’s nuance.

Fine-Tuning is the process of adapting a pre-trained LLM by further training it on a curated, domain-specific dataset. This adjusts the model’s internal weights so that specialized knowledge and desired behaviors become baked directly into the model itself. RAG, by contrast, keeps the base model completely unchanged. Instead of modifying the model, RAG connects it to an external knowledge base at the moment a user asks a question — retrieving relevant documents and feeding them as context into the model’s response generation process.

| Pro Tip The fastest way to understand the difference: Fine-Tuning is like sending an employee back to university to learn a new specialization. RAG is like giving that same employee access to a real-time reference library whenever they need to answer a question. Both improve performance, but through completely different mechanisms. |

2. How Fine-Tuning Works

Fine-Tuning works by taking a pre-trained foundation model — such as GPT-4, Claude, or Llama — and running additional training passes on a carefully curated dataset specific to your domain or task. During this process, the model’s weights and parameters are adjusted to reflect the new training data. The result is a model that has internalized the domain’s terminology, reasoning patterns, output formats, and behavioral norms at a deep level.

The Fine-Tuning process follows five stages. First, you select a base model with sufficient general capability for your use case. Second, you prepare a high-quality domain-specific dataset, typically thousands to millions of question-answer pairs, documents, or task examples. Third, you run training on GPU compute, which adjusts the model’s internal parameters through backpropagation. Fourth, you evaluate the fine-tuned model against benchmark tasks. Fifth, you deploy it; the model now generates responses that reflect its specialized training without requiring explicit instructions in every prompt.

| Fine-Tuning Stage | What Happens | Key Requirement |
|---|---|---|
| 01 Base Model Selection | Choose a foundation LLM sized for your task | Capability match to domain complexity |
| 02 Dataset Preparation | Curate high-quality domain-specific training examples | Thousands of clean, labeled examples minimum |
| 03 GPU Training Run | Model weights adjusted via backpropagation passes | High-performance GPU compute (expensive) |
| 04 Evaluation | Test fine-tuned model against benchmark tasks | Domain expert review plus automated metrics |
| 05 Deployment | Serve the specialized model in production | Standard inference infrastructure |

| Pro Tip Fine-Tuning is significantly more powerful when you have a stable, well-defined domain with high-quality training data. A poorly curated training dataset can produce a model that performs worse than the base model. Garbage in, garbage out applies with even more force in fine-tuning than in standard prompting. |
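
The weight adjustment in stage 03 can be illustrated with a deliberately tiny sketch. It assumes a two-parameter linear model in place of an LLM and computes gradients by hand rather than through backpropagation across many layers; the names `fine_tune`, `pretrained`, and `domain_data` are hypothetical and chosen only for illustration.

```python
# Toy illustration of fine-tuning: start from "pre-trained" weights and
# continue gradient descent on a small domain-specific dataset.
# The model, data, and hyperparameters are hypothetical.

def mse(weights, data):
    """Mean squared error of the linear model y = w * x + b on `data`."""
    w, b = weights
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def fine_tune(weights, data, lr=0.05, epochs=500):
    """Return new weights after `epochs` full-batch gradient steps on `data`."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        # Gradients of the MSE loss with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)

pretrained = (1.0, 0.0)  # stands in for the base model's weights
domain_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]  # follows y = 2x + 1
tuned = fine_tune(pretrained, domain_data)

print("loss before:", mse(pretrained, domain_data))
print("loss after: ", mse(tuned, domain_data))
```

The point of the sketch is that the domain knowledge ends up inside `tuned` itself: nothing has to be looked up at prediction time, and incorporating new data means running `fine_tune` again.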

3. How RAG Works

Retrieval-Augmented Generation (RAG) was introduced in 2020 by researchers at Facebook AI Research (now Meta AI) and their collaborators in the paper Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. Rather than modifying the model’s weights, RAG enhances responses by connecting the LLM to an external knowledge base at inference time, whenever a user submits a query.

The RAG process operates through five stages. First, a user submits a query. Second, the system converts the query into a vector embedding and searches a vector database for semantically similar documents. Third, the vector database returns the most relevant documents. Fourth, those documents are combined with the original query and fed into the LLM as context. Fifth, the model generates a response grounded in both its training knowledge and the retrieved, up-to-date information, and it can cite its sources so users can verify the answer.

| RAG Stage | What Happens | Key Advantage |
|---|---|---|
| 01 Query Received | User submits a question or request | No model retraining needed |
| 02 Vector Search | Semantic search across knowledge base | Finds by meaning, not just keywords |
| 03 Document Retrieval | Most relevant docs returned by vector DB | Always uses most current information |
| 04 Context Injection | Retrieved docs combined with query as context | Grounds response in authoritative sources |
| 05 Grounded Response | LLM generates answer citing retrieved sources | Transparent, verifiable, auditable output |

| Pro Tip RAG systems can reduce hallucination rates by up to 85% compared to baseline LLM responses, because the model synthesizes from retrieved documents rather than generating from internal patterns. When the answer exists in your knowledge base, retrieval finds it — the model does not have to guess. |
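
The retrieval and context-injection stages can be sketched in a few lines. This is a minimal illustration, not a production pipeline: it assumes word counts as a stand-in for a real embedding model, an in-memory dictionary in place of a vector database, and it stops at prompt assembly rather than calling an LLM. The names `embed`, `retrieve`, `build_prompt`, and `knowledge_base` are hypothetical.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: lowercase word counts (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, documents, k=2):
    """Return the ids of the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda doc_id: cosine(q, embed(documents[doc_id])), reverse=True)
    return ranked[:k]

def build_prompt(query, documents, k=2):
    """Combine retrieved documents with the query, tagging each with its source id."""
    sources = retrieve(query, documents, k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in sources)
    return f"Answer using only the sources below and cite their ids.\n{context}\n\nQuestion: {query}"

# Hypothetical knowledge base; in practice this would live in a vector database.
knowledge_base = {
    "returns-policy": "You can return an item within 30 days of delivery.",
    "shipping-policy": "Standard shipping takes about one week.",
    "warranty": "Hardware is covered by a two year warranty.",
}

prompt = build_prompt("How many days do I have to return an item?", knowledge_base, k=1)
print(prompt)
```

Because the knowledge lives outside the model, adding a new entry to `knowledge_base` makes it retrievable on the very next query, with no training step involved. The source ids carried into the prompt are what make citation possible.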

4. Fine-Tuning vs RAG: Side-by-Side Comparison

The most important architectural difference between Fine-Tuning and RAG is where the knowledge lives. In Fine-Tuning, knowledge is embedded into the model’s parameters — it becomes part of the model itself. In RAG, knowledge lives in an external database that is queried at inference time. This single difference cascades into every practical tradeoff between the two approaches.

| Factor | Fine-Tuning | RAG |
|---|---|---|
| Knowledge Location | Baked into model weights | External vector database |
| Handles New Data | Requires full retraining | Auto-updated: refresh the index |
| Upfront Cost | High: GPU compute required | Low: uses existing models |
| Ongoing Cost | Low inference cost post-training | Retrieval cost per query |
| Factual Accuracy | 94% on domain-specific tasks | 87% on factual knowledge tasks |
| Hallucination Risk | Medium: recalls from parameters | Low: grounded in retrieved docs |
| Source Attribution | Not possible (black box) | Full citation of every source |
| Data Governance | Complex: data baked into weights | Strong: access controls at query time |
| Deployment Speed | Weeks to months | Days |
| Technical Skill Needed | ML engineering expertise | Database and retrieval engineering |

| Pro Tip Neither Fine-Tuning nor RAG is universally superior. RAG consistently outperforms Fine-Tuning for factual accuracy on frequently changing information. Fine-Tuning consistently outperforms RAG for style consistency, behavioral control, and tasks requiring deep domain reasoning on stable knowledge. The best architects choose based on their specific requirements. |

5. Full Scorecard Across 8 Decision Factors

When evaluating Fine-Tuning versus RAG for a production AI application, eight factors consistently determine the right choice. Understanding how each approach scores across these dimensions gives you a clear, defensible basis for your architecture decision.

• Upfront Cost: RAG wins clearly. RAG implementation requires database and retrieval engineering using existing models. Fine-Tuning demands GPU compute, large curated datasets, and ML engineering expertise — making it three to ten times more expensive to get started.

• Time to Deploy: RAG wins clearly. A production RAG system can be deployed in days using a managed vector database such as Pinecone or Weaviate together with a hosted embedding API such as OpenAI embeddings. A fine-tuned model requires weeks to months of data preparation, training, and evaluation.

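• Factual Accuracy: Hybrid wins; between the other two, RAG leads on factual questions and Fine-Tuning on domain-specific tasks. Research from Stanford found RAG systems achieve 87% accuracy on factual questions when retrieval precision exceeds 90%. Fine-tuned models reached 94% accuracy on domain-specific tasks. Because these two figures measure different task types, they are not directly comparable. Hybrid systems combining both achieved 96% accuracy.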

• Handling New Data: RAG wins decisively. Update your source documents, refresh the vector index, and the RAG system immediately uses current information. Fine-Tuning requires a full retraining cycle to incorporate any new knowledge.

• Style and Behavior Control: Fine-Tuning wins clearly. Fine-Tuning encodes consistent tone, format preferences, and behavioral norms directly into the model’s parameters. RAG has limited control over style and must rely on prompt instructions for every interaction.

• Hallucination Risk: RAG wins. RAG grounds every response in retrieved documents, dramatically reducing the model’s tendency to generate plausible but incorrect information. Fine-tuned models must recall facts from parameters and can hallucinate details not learned well during training.

• Data Governance: RAG wins. RAG enforces access controls at query time — sensitive documents stay in controlled repositories with full audit trails. Fine-Tuning bakes information into weights, making it extremely difficult to remove specific data or control access after training.

• Best For: Use Fine-Tuning for specialist tasks requiring deep domain expertise with stable knowledge. Use RAG for dynamic knowledge bases, customer support, research, and any application where up-to-date information is critical.
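
One way to turn the scorecard above into a decision aid is to weight each factor by how much it matters to your project and tally the result. The sketch below encodes the seven factors that name a winner (the "Best For" entry is a summary rather than a scored factor); the weighting scheme and the names `SCORECARD` and `weighted_tally` are assumptions made for illustration.

```python
# Winner per factor, as stated in the scorecard above.
SCORECARD = {
    "upfront_cost": "RAG",
    "time_to_deploy": "RAG",
    "factual_accuracy": "Hybrid",
    "handling_new_data": "RAG",
    "style_and_behavior_control": "Fine-Tuning",
    "hallucination_risk": "RAG",
    "data_governance": "RAG",
}

def weighted_tally(weights=None):
    """Add each factor's weight to the approach that wins it (default weight 1.0)."""
    weights = weights or {}
    totals = {"RAG": 0.0, "Fine-Tuning": 0.0, "Hybrid": 0.0}
    for factor, winner in SCORECARD.items():
        totals[winner] += weights.get(factor, 1.0)
    return totals

# Equal weights versus a hypothetical project where brand voice dominates.
print(weighted_tally())
print(weighted_tally({"style_and_behavior_control": 10.0}))
```

With equal weights RAG takes five of the seven factors, which matches the "RAG first" advice. A team that weights style control heavily sees the balance shift toward Fine-Tuning, which is the situation Section 6 describes.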

6. When to Use Fine-Tuning

Fine-Tuning delivers its strongest advantage in situations where you need deep, consistent domain expertise on a relatively stable knowledge base, and where the upfront investment in compute and data preparation is justified by long-term performance gains.

• Consistent Style and Tone: Your application requires a specific branded voice, response format, or communication style that must be applied consistently across every interaction without relying on prompt instructions.

• Deep Domain Expertise: You are building in a highly specialized field — clinical diagnostics, legal document analysis, financial modeling, or internal API code generation — where the model needs to internalize domain-specific reasoning patterns, not just retrieve facts.

• Stable Knowledge Base: Your domain knowledge changes infrequently. Medical diagnostic criteria, legal frameworks, or coding standards evolve slowly enough that periodic retraining is acceptable.

• Smaller Model Performance: You need a smaller, faster, cheaper model to perform at the level of a much larger general model on a specific task. Fine-Tuning can make a small model match or exceed a large model on narrow tasks, dramatically reducing inference costs at scale.

• Proprietary Behavioral Patterns: You need the model to follow complex, nuanced behavioral rules that are difficult to express through prompting — for example, a financial AI that must apply proprietary analytical methodologies consistently.

| Pro Tip Ideal Fine-Tuning use cases in 2026 include code generation for internal APIs, specialized translation services for proprietary terminology, branded content creation at scale, clinical diagnosis support, financial analysis using proprietary methodologies, and custom SQL query generation from natural language across complex internal schemas. |

7. When to Use RAG

RAG delivers its strongest advantage in situations where knowledge changes frequently, source attribution is important, governance requirements are strict, or you need to get a production system deployed quickly without deep ML engineering resources.

• Frequently Changing Information: Your knowledge base updates daily or weekly — product catalogues, regulatory documents, pricing, policy manuals, or news. RAG automatically uses the most current version without retraining.

• Source Attribution Required: Your application requires every response to cite its sources so users, auditors, or compliance officers can verify the information. RAG enables full citation of retrieved documents. Fine-tuned models cannot attribute specific facts.

• Strict Data Governance: You operate in a regulated industry where sensitive data must remain in controlled repositories with access controls and audit trails. RAG enforces governance at query time. Fine-Tuning bakes data into weights, complicating governance significantly.

• Large and Growing Knowledge Base: Your application needs to search across millions of documents. RAG’s retrieval architecture scales naturally to very large knowledge bases. Fine-Tuning on millions of documents becomes prohibitively expensive.

• Fast Time to Value: You need a working AI system within days or weeks, not months. RAG with managed vector database services can be production-ready rapidly using existing foundation models.

• Hallucination Reduction Priority: Your use case absolutely cannot tolerate factual errors — legal research, medical information, technical specifications. RAG’s grounding in retrieved documents provides the strongest protection against AI hallucination.

| Pro Tip Ideal RAG use cases in 2026 include customer support chatbots, legal research assistants, medical literature search, enterprise knowledge management systems, real-time news analysis, regulatory compliance monitoring, HR policy Q&A systems, and any application where the answer exists in a document and must be delivered accurately and verifiably. |

8. The Hybrid Approach: Combining Both

Figure 4: Fine-Tuning vs RAG Decision Tree — Answer 4 Questions to Find the Right Strategy

In 2026, the most sophisticated AI applications combine Fine-Tuning and RAG to capture the complementary strengths of both approaches. The core insight of the hybrid architecture is that Fine-Tuning controls HOW the model responds — its style, format, and reasoning approach — while RAG controls WHAT information the model responds with — current, accurate, verifiable facts from your knowledge base.

Some enterprise teams, for example, fine-tune a model such as Claude to match their organization’s communication style, terminology, and response format, then layer RAG on top to access current product documentation, customer records, and regulatory updates. In the benchmarks cited for this comparison, the hybrid approach delivered 96% accuracy, ahead of RAG-only systems at 89% and Fine-Tuning-only systems at 91%.

8.1 Real-World Hybrid Architecture Example

A leading e-commerce platform demonstrates the hybrid approach at scale. The company Fine-Tuned a base language model on their brand voice, product description style, and customer service communication patterns. They then layered a RAG system on top connecting to real-time inventory levels, current pricing, live customer reviews, and updated return policies. The result was a customer service AI that responds in perfect brand voice with always-accurate, real-time product information — achieving 98% customer satisfaction scores and processing 50 million queries monthly at 60% lower cost than a pure RAG implementation.

8.2 When to Choose the Hybrid Approach

• Your application requires both consistent branded behavior AND access to frequently updating factual information

• You are building enterprise customer service, e-commerce assistants, or healthcare support systems where both style and accuracy are non-negotiable

• You need the hallucination reduction of RAG combined with the behavioral consistency of Fine-Tuning for high-stakes applications

• Your organization is mature enough in AI to invest in building and maintaining both the fine-tuned model and the retrieval pipeline
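
The criteria in Sections 6, 7, and 8.2 can be condensed into a small decision function. The four yes/no inputs below are an assumption drawn from those lists and are not necessarily the four questions used in Figure 4; the function name `recommend` is hypothetical.

```python
def recommend(knowledge_changes_often, needs_citations, needs_consistent_style, can_maintain_both):
    """Suggest an architecture from four yes/no answers (illustrative only)."""
    needs_rag = knowledge_changes_often or needs_citations
    needs_fine_tuning = needs_consistent_style
    if needs_rag and needs_fine_tuning:
        # Hybrid only pays off if the team can run both pipelines.
        return "Hybrid" if can_maintain_both else "RAG"
    if needs_fine_tuning:
        return "Fine-Tuning"
    # Default to RAG, following the article's "RAG first" recommendation.
    return "RAG"

print(recommend(True, True, True, True))
print(recommend(False, False, True, False))
```

Falling back to RAG when a team cannot yet maintain both systems reflects the upgrade path described later: validate with RAG, then add Fine-Tuning once the style gaps are clear.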

| Pro Tip In 2026, frameworks including LangChain and LlamaIndex provide routing components that can decide per query whether to use RAG, a fine-tuned model, or both. Such a routing layer analyzes query characteristics and balances accuracy, latency, and cost, which simplifies hybrid architecture implementation considerably. |
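
To make per-query routing concrete, here is a minimal sketch that uses keyword cues. It is not the LangChain or LlamaIndex API; the cue lists, the `route` function, and the three route labels are hypothetical, and a real router would more likely use a classifier or an LLM call.

```python
import re

# Hypothetical cue words; a real system would learn or tune these.
FACT_CUES = {"price", "stock", "policy", "latest", "current", "today", "status"}
STYLE_CUES = {"write", "draft", "rewrite", "summarize", "tone", "email"}

def route(query):
    """Return 'rag', 'fine_tuned', or 'both' based on simple keyword cues."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    needs_facts = bool(words & FACT_CUES)
    needs_style = bool(words & STYLE_CUES)
    if needs_facts and needs_style:
        return "both"
    if needs_style:
        return "fine_tuned"
    # Default to retrieval so answers stay grounded in documents.
    return "rag"

for q in [
    "What is the current price of the X200?",
    "Draft a friendly apology email",
    "Write an email explaining our latest return policy",
]:
    print(q, "->", route(q))
```

Defaulting to the retrieval path is a design choice: when the router is unsure, a grounded answer is the safer failure mode.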

9. Frequently Asked Questions

What is the main difference between Fine-Tuning and RAG?

The core difference is where the knowledge lives. In Fine-Tuning, knowledge is embedded into the model’s internal parameters through additional training — it becomes part of the model itself. In RAG, knowledge lives in an external vector database that is searched at the moment a user asks a question. Fine-Tuning changes the model. RAG augments the model with external information without changing it.

Which approach is cheaper — Fine-Tuning or RAG?

RAG has significantly lower upfront costs. Implementing RAG uses existing pre-trained models and requires database and retrieval engineering expertise rather than expensive GPU compute and large labeled datasets. Fine-Tuning has high upfront costs in compute, data preparation, and ML engineering, but lower ongoing inference costs once deployed. RAG incurs ongoing retrieval costs for every query. For most organizations starting out, RAG provides faster value at lower initial investment.
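
The trade-off between upfront and per-query cost comes down to a break-even calculation. The sketch below shows the arithmetic; every dollar figure in it is a made-up placeholder, and the function names are hypothetical.

```python
def total_cost(upfront, per_query, queries):
    """Total cost after serving `queries` requests."""
    return upfront + per_query * queries

def break_even_queries(ft_upfront, ft_per_query, rag_upfront, rag_per_query):
    """Query volume at which Fine-Tuning's total cost matches RAG's.

    Returns None when Fine-Tuning is not cheaper per query, because it
    then never recovers its higher upfront cost.
    """
    saving_per_query = rag_per_query - ft_per_query
    if saving_per_query <= 0:
        return None
    return max(0.0, (ft_upfront - rag_upfront) / saving_per_query)

# Placeholder figures for illustration only, not measured costs.
n = break_even_queries(ft_upfront=50_000, ft_per_query=0.002,
                       rag_upfront=5_000, rag_per_query=0.005)
print(f"break-even at roughly {n:,.0f} queries")
```

With these placeholder numbers the break-even point lands at about 15 million queries. Below that volume RAG is cheaper overall; above it, the lower per-query cost of the fine-tuned model starts to pay back the training investment.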

Which approach reduces AI hallucination more?

RAG reduces hallucination more effectively for factual queries. Because RAG grounds every response in retrieved documents, the model synthesizes from provided content rather than generating from internal patterns — reducing hallucination rates by up to 85% compared to baseline models. Fine-tuned models must recall facts from parameters and can hallucinate details that were not learned well during training. For factual accuracy on knowledge-intensive tasks, RAG consistently outperforms Fine-Tuning.

Can I use Fine-Tuning and RAG together?

Yes — and in 2026, this hybrid approach delivers the best results for complex enterprise applications. Fine-Tuning is used to establish consistent behavioral patterns — response format, tone, domain reasoning — while RAG provides dynamic, up-to-date factual information at inference time. Hybrid systems achieve 96% accuracy in recent benchmarks, outperforming both RAG-only at 89% and Fine-Tuning-only at 91%. The pattern is: Fine-Tuning teaches HOW to respond, RAG provides WHAT to respond with.

How long does Fine-Tuning take compared to RAG deployment?

RAG systems can typically be deployed in days using a managed vector database such as Pinecone or Weaviate together with a hosted embedding API such as OpenAI embeddings. Fine-Tuning takes weeks to months: dataset curation alone can take weeks, training runs take hours to days depending on model size and dataset volume, and thorough evaluation adds more time. If speed to production is a priority, RAG is almost always the better starting point.

Which approach is better for regulated industries?

RAG provides significantly stronger data governance for regulated industries. RAG enforces access controls at query time — sensitive documents remain in controlled repositories with audit trails tracking exactly what information was accessed for each response. Fine-Tuning bakes information into model weights, making it extremely difficult to remove specific data, control access, or demonstrate to auditors exactly what the model knows. For healthcare, finance, and legal applications with strict compliance requirements, RAG’s governance architecture is the clear winner.
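
Query-time access control can be sketched as a filter that runs before retrieval, plus an audit record for each request. This is a simplified illustration that assumes role sets attached to each document and plain keyword matching in place of vector search; the document schema and function names are hypothetical.

```python
def allowed_documents(documents, user_roles):
    """Keep only documents whose allowed roles overlap the user's roles."""
    return [d for d in documents if d["allowed_roles"] & user_roles]

def retrieve_with_audit(query, documents, user, audit_log):
    """Search only documents the user may see, and record what was returned."""
    visible = allowed_documents(documents, user["roles"])
    terms = query.lower().split()
    hits = [d for d in visible if any(t in d["text"].lower() for t in terms)]
    audit_log.append({"user": user["name"], "query": query,
                      "doc_ids": [d["id"] for d in hits]})
    return hits

# Hypothetical documents and users.
documents = [
    {"id": "hr-1", "text": "Salary bands for 2026", "allowed_roles": {"hr"}},
    {"id": "pub-1", "text": "Office holiday schedule", "allowed_roles": {"hr", "staff"}},
]
audit_log = []
staff_hits = retrieve_with_audit("salary holiday", documents,
                                 {"name": "sam", "roles": {"staff"}}, audit_log)
hr_hits = retrieve_with_audit("salary holiday", documents,
                              {"name": "ana", "roles": {"hr"}}, audit_log)
print([d["id"] for d in staff_hits], [d["id"] for d in hr_hits])
```

The restricted document never reaches the model for a user without the right role, and the log shows which documents backed each response. Neither guarantee is available once the same text has been trained into model weights.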

What is the best starting point for a company new to AI?

For most organizations beginning their AI journey, RAG offers the fastest path to value with the lowest risk. It is forgiving of mistakes, easy to iterate on, and does not require deep machine learning expertise. Starting with a managed RAG implementation using an existing foundation model lets you build useful AI applications in days and learn what your specific use case actually requires before committing to the upfront investment of Fine-Tuning. As your application matures, Fine-Tuning can be added for components where consistency and style matter most.

10. Conclusion and Decision Framework

The Fine-Tuning vs RAG question does not have a single correct answer — the optimal choice depends entirely on your specific requirements, constraints, and use case. In 2026, the most effective AI applications use both techniques strategically: RAG for dynamic information retrieval and hallucination reduction, Fine-Tuning for deep behavioral consistency and domain expertise. The 96% accuracy achieved by hybrid systems demonstrates that the two approaches are better understood as complementary than competitive.

For most organizations starting out, the evidence clearly points to RAG first. It is faster to deploy, more forgiving of early mistakes, and requires no machine learning infrastructure. Once your use case is validated and your requirements are clear, layer in Fine-Tuning for the components where consistent style and deep domain expertise provide the most value. The initial choice does not lock you in — the best architecture emerges through experimentation and iteration based on real-world performance data.

Key Takeaways

• Fine-Tuning modifies model weights — knowledge is baked into the model itself through additional training on domain data

• RAG keeps the model unchanged — knowledge lives in an external vector database searched at inference time

• RAG wins on cost, speed, new data handling, hallucination reduction, source attribution, and data governance

• Fine-Tuning wins on style control, behavioral consistency, and deep domain expertise for stable knowledge bases

• Hybrid systems achieve 96% accuracy — higher than RAG-only at 89% or Fine-Tuning-only at 91%

• RAG reduces hallucination by up to 85% by grounding every response in retrieved documents rather than parameter recall

• For regulated industries, RAG provides far stronger governance — sensitive data stays in controlled repositories

• For most organizations starting with AI, RAG is the recommended first step — faster, cheaper, and lower risk

• In 2026, frameworks like LangChain provide routing components that can combine both approaches per query

Quick Recommendations

Start Here — Best for Most Teams:

• Begin with a managed RAG implementation built on a vector database such as Pinecone or Weaviate and a hosted embedding API such as OpenAI embeddings; this can be production-ready in days without deep ML expertise

• Use a foundation model like Claude or GPT-4 as your base — no Fine-Tuning needed until you have validated your use case and data requirements

Upgrade Path — When to Add Fine-Tuning:

• Add Fine-Tuning once your RAG system is working and you identify specific style, format, or behavioral consistency gaps that prompt engineering cannot reliably solve

• Consider Fine-Tuning when you need a smaller, faster model to match large model performance on a specific narrow task at scale

Enterprise Scale — Building the Hybrid:

• Use LangChain or LlamaIndex to implement automated routing that decides per query whether to use RAG, Fine-Tuning, or both based on query characteristics

• Track hallucination rates, factual accuracy, and response consistency separately for RAG and Fine-Tuned components to optimize each independently
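
Tracking the two components separately can start with a very small aggregation step. The sketch below assumes each evaluated response has already been labeled by a reviewer or an automated check as correct or not and hallucinated or not; the record format and the `summarize` function are hypothetical.

```python
def summarize(results):
    """Compute accuracy and hallucination rate per component.

    `results` is a list of dicts with keys 'component', 'correct' (bool),
    and 'hallucinated' (bool).
    """
    counts = {}
    for r in results:
        c = counts.setdefault(r["component"], {"n": 0, "correct": 0, "hallucinated": 0})
        c["n"] += 1
        c["correct"] += int(r["correct"])
        c["hallucinated"] += int(r["hallucinated"])
    return {
        name: {
            "accuracy": c["correct"] / c["n"],
            "hallucination_rate": c["hallucinated"] / c["n"],
        }
        for name, c in counts.items()
    }

# Hypothetical labeled evaluation records.
results = [
    {"component": "rag", "correct": True, "hallucinated": False},
    {"component": "rag", "correct": True, "hallucinated": False},
    {"component": "rag", "correct": False, "hallucinated": True},
    {"component": "rag", "correct": True, "hallucinated": False},
    {"component": "fine_tuned", "correct": True, "hallucinated": False},
    {"component": "fine_tuned", "correct": False, "hallucinated": True},
]
report = summarize(results)
print(report)
```

Keeping the numbers per component makes it clear whether a regression comes from retrieval quality or from the fine-tuned model's behavior, so each can be fixed independently.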

Fine-Tuning vs RAG Action Plan — Start Today

1. TODAY: Define your use case requirements across the 8 decision factors — upfront cost, deployment speed, factual accuracy, new data frequency, style control, hallucination tolerance, governance, and target task

2. DAY 2: Use the decision tree in Figure 4 to determine whether RAG, Fine-Tuning, or Hybrid is the right architecture for your specific situation

3. WEEK 1: Set up a proof-of-concept RAG system using a managed vector database service and an existing foundation model to validate your use case before committing to Fine-Tuning

4. WEEK 2: Evaluate your RAG proof-of-concept against your accuracy, latency, and cost requirements and identify specific gaps that Fine-Tuning might address

5. MONTH 1: If Fine-Tuning is warranted, begin dataset curation — the quality of your training data is the single most important factor in Fine-Tuning success

6. ONGOING: Track hallucination rates and accuracy benchmarks monthly, and revisit your architecture as your requirements change

The right AI architecture is not the most complex one — it is the one that solves your specific problem most reliably. Start with RAG, validate your requirements, and build toward the hybrid that delivers exactly the performance your use case demands.